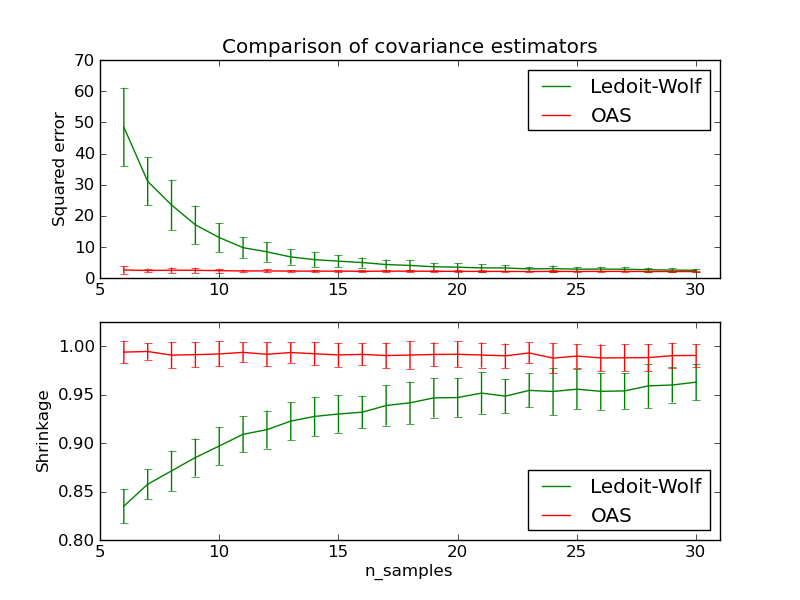

Ledoit-Wolf vs OAS estimation

The usual maximum likelihood covariance estimate can be regularized using shrinkage. Ledoit and Wolf proposed a closed-form formula for the asymptotically optimal shrinkage parameter (the one minimizing an MSE criterion), which yields the Ledoit-Wolf covariance estimate.
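To make the shrinkage idea concrete, here is a minimal NumPy sketch. It assumes the common convex-combination form, in which the maximum likelihood estimate is blended with a scaled identity matrix. The `shrink` helper and the fixed coefficient `0.3` are illustrative assumptions, not the Ledoit-Wolf optimum.

```python
import numpy as np


def shrink(emp_cov, alpha):
    """Blend emp_cov with a scaled identity (hypothetical helper).

    Returns (1 - alpha) * emp_cov + alpha * mu * I, where mu is the
    average variance trace(emp_cov) / n_features.
    """
    n_features = emp_cov.shape[0]
    mu = np.trace(emp_cov) / n_features
    return (1.0 - alpha) * emp_cov + alpha * mu * np.eye(n_features)


rng = np.random.RandomState(0)
n_samples, n_features = 10, 20  # fewer samples than features
X = rng.normal(size=(n_samples, n_features))

# maximum likelihood estimate, treating the data as already centered
emp_cov = np.dot(X.T, X) / n_samples

# alpha=0.3 is an arbitrary value chosen for illustration
shrunk_cov = shrink(emp_cov, alpha=0.3)

emp_rank = np.linalg.matrix_rank(emp_cov)
min_eig = np.linalg.eigvalsh(shrunk_cov).min()
print("rank of the maximum likelihood estimate:", emp_rank)
print("smallest eigenvalue after shrinkage:", min_eig)
```

With fewer samples than features the maximum likelihood estimate is rank deficient, while the shrunk estimate is positive definite and keeps the same trace.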

Chen et al. proposed an improvement of the Ledoit-Wolf shrinkage parameter, the OAS coefficient, whose convergence is significantly better under the assumption that the data are Gaussian.
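As a rough illustration of the OAS coefficient, the sketch below implements the closed form as it is usually quoted from [1]. The exact expression is an assumption to check against the paper, and scikit-learn's `OAS` may differ in detail. The `oas_shrinkage` helper and the test covariance (AR(1) with `r = 0.5`) are hypothetical choices made for this sketch.

```python
import numpy as np


def oas_shrinkage(X):
    """OAS coefficient for centered data X (closed form assumed from [1])."""
    n_samples, n_features = X.shape
    emp_cov = np.dot(X.T, X) / n_samples
    tr_s = np.trace(emp_cov)
    tr_s2 = np.sum(emp_cov ** 2)  # equals trace(S S) for a symmetric S
    num = (1.0 - 2.0 / n_features) * tr_s2 + tr_s ** 2
    den = (n_samples + 1.0 - 2.0 / n_features) * (
        tr_s2 - tr_s ** 2 / n_features)
    # den is zero only when emp_cov is a multiple of the identity
    return 1.0 if den == 0 else min(num / den, 1.0)


rng = np.random.RandomState(0)
n_features, r = 50, 0.5
idx = np.arange(n_features)
true_cov = r ** np.abs(idx[:, None] - idx[None, :])  # AR(1) covariance
L = np.linalg.cholesky(true_cov)  # lower triangular, L L^T = true_cov

X_small = np.dot(rng.normal(size=(10, n_features)), L.T)
X_large = np.dot(rng.normal(size=(1000, n_features)), L.T)

rho_small = oas_shrinkage(X_small)
rho_large = oas_shrinkage(X_large)
print("shrinkage with 10 samples:", rho_small)
print("shrinkage with 1000 samples:", rho_large)
```

The coefficient is clipped to at most 1 and shrinks toward 0 as more samples become available, which matches the intuition that a well-estimated covariance needs less regularization.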

This example, inspired by Chen’s publication [1], compares the estimated MSE of the LW and OAS methods on Gaussian-distributed data.

[1] Chen et al., “Shrinkage Algorithms for MMSE Covariance Estimation”, IEEE Trans. on Signal Processing, Volume 58, Issue 10, October 2010.
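The "estimated MSE" can be read as a Monte Carlo average of the squared Frobenius error between an estimate and the true covariance. The sketch below shows that measurement with NumPy only. To stay dependency-light it scores the plain maximum likelihood estimate rather than LW or OAS, and the helper names and the sizes (20 features, 50 repetitions) are assumptions made for illustration.

```python
import numpy as np


def squared_frobenius_error(est_cov, true_cov):
    """Sum of squared entry-wise differences (hypothetical helper)."""
    return np.sum((est_cov - true_cov) ** 2)


rng = np.random.RandomState(42)
n_features, r = 20, 0.1
idx = np.arange(n_features)
true_cov = r ** np.abs(idx[:, None] - idx[None, :])  # AR(1) covariance
L = np.linalg.cholesky(true_cov)  # lower triangular, L L^T = true_cov


def estimated_mse(n_samples, repeat=50):
    """Average the squared error over `repeat` independent draws."""
    errors = np.empty(repeat)
    for j in range(repeat):
        # rows of Z L^T have covariance L L^T = true_cov
        X = np.dot(rng.normal(size=(n_samples, n_features)), L.T)
        emp_cov = np.dot(X.T, X) / n_samples
        errors[j] = squared_frobenius_error(emp_cov, true_cov)
    return errors.mean(), errors.std()


mse_small, std_small = estimated_mse(10)
mse_large, std_large = estimated_mse(200)
print("estimated MSE with 10 samples:", mse_small)
print("estimated MSE with 200 samples:", mse_large)
```

The mean over repetitions is the value plotted against `n_samples`, and the standard deviation is what the error bars show.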

Python source code: plot_lw_vs_oas.py

print(__doc__)

import numpy as np
import pylab as pl
from scipy.linalg import toeplitz, cholesky
from sklearn.covariance import LedoitWolf, OAS

###############################################################################

n_features = 100

# simulation covariance matrix (AR(1) process)
r = 0.1
real_cov = toeplitz(r ** np.arange(n_features))

# lower-triangular factor L with L L^T = real_cov, so that the rows of
# Z L^T (Z standard normal) have covariance real_cov
coloring_matrix = cholesky(real_cov, lower=True)

n_samples_range = np.arange(6, 31, 1)
repeat = 100

# one row per sample size, one column per repetition
lw_mse = np.zeros((n_samples_range.size, repeat))
oa_mse = np.zeros((n_samples_range.size, repeat))
lw_shrinkage = np.zeros((n_samples_range.size, repeat))
oa_shrinkage = np.zeros((n_samples_range.size, repeat))

for i, n_samples in enumerate(n_samples_range):
    for j in range(repeat):
        # draw Gaussian samples and color them with the Cholesky factor
        X = np.dot(
            np.random.normal(size=(n_samples, n_features)), coloring_matrix.T)

        # in current scikit-learn releases assume_centered is a
        # constructor argument rather than an argument of fit
        lw = LedoitWolf(store_precision=False, assume_centered=True)
        lw.fit(X)
        lw_mse[i, j] = lw.error_norm(real_cov, scaling=False)
        lw_shrinkage[i, j] = lw.shrinkage_

        oa = OAS(store_precision=False, assume_centered=True)
        oa.fit(X)
        oa_mse[i, j] = oa.error_norm(real_cov, scaling=False)
        oa_shrinkage[i, j] = oa.shrinkage_

# plot MSE
pl.subplot(2, 1, 1)
pl.errorbar(n_samples_range, lw_mse.mean(1), yerr=lw_mse.std(1),
            label='Ledoit-Wolf', color='g')
pl.errorbar(n_samples_range, oa_mse.mean(1), yerr=oa_mse.std(1),
            label='OAS', color='r')
pl.ylabel("Squared error")
pl.legend(loc="upper right")
pl.title("Comparison of covariance estimators")
pl.xlim(5, 31)

# plot shrinkage coefficient
pl.subplot(2, 1, 2)
pl.errorbar(n_samples_range, lw_shrinkage.mean(1), yerr=lw_shrinkage.std(1),
            label='Ledoit-Wolf', color='g')
pl.errorbar(n_samples_range, oa_shrinkage.mean(1), yerr=oa_shrinkage.std(1),
            label='OAS', color='r')
pl.xlabel("n_samples")
pl.ylabel("Shrinkage")
pl.legend(loc="lower right")
pl.ylim(pl.ylim()[0], 1. + (pl.ylim()[1] - pl.ylim()[0]) / 10.)
pl.xlim(5, 31)

pl.show()